Meta signs agreement with AWS to power agentic AI on Amazon's Graviton chips

Amazon Web Services (AWS) and Meta have expanded a long-running partnership: Meta will deploy AWS Graviton processors at very large scale to help power the CPU-intensive layers of its AI stack, including the orchestration and services that sit around frontier models. GPUs remain central to training large models, but agentic AI is creating new demand for efficient, high-throughput compute for reasoning, search, code generation, and multi-step workflows.

Key takeaways

- Scale first: The deployment is described as starting with tens of millions of Graviton cores, with room to grow as Meta’s AI footprint expands.

- Top-tier Graviton footprint: Meta is positioned as one of the largest Graviton customers in the world—evidence that Arm-based cloud CPUs are now “main character” infrastructure, not a niche experiment.

- Bedrock and the broader AWS AI stack: The announcement frames the deal as building on Meta’s existing use of AWS services—including Amazon Bedrock at scale—for the next generation of AI products.

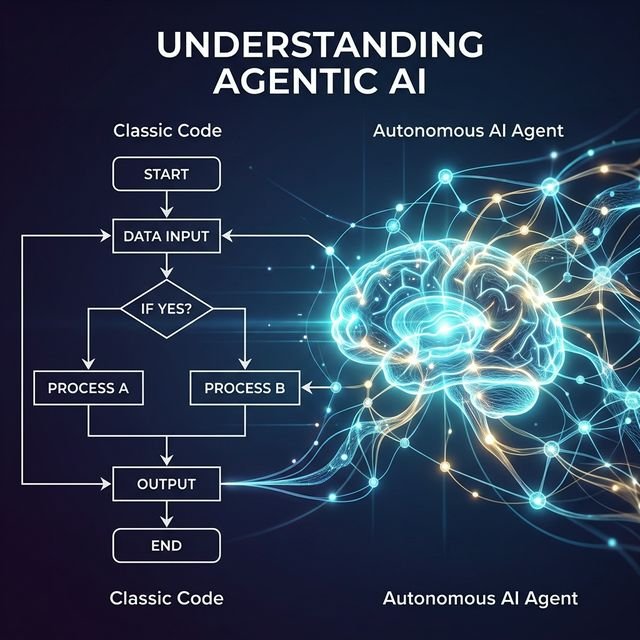

- Agentic workloads are hybrid: The story is not “CPUs replace GPUs”; it is that modern AI systems need both—accelerators for model math, and very strong general-purpose fleets for everything wrapped around it.

Why agentic AI changes the CPU calculus

Classic chatbots mostly passed tokens through a model. Agentic systems add loops: plan → call tools → verify → retry → aggregate context → enforce policies. At Meta’s traffic levels, those steps add up to billions of interactions that must be scheduled, authenticated, cached, and coordinated. Much of that work is CPU-bound: control-plane logic, serialization, RPC fan-out, feature preparation, and low-latency glue between services.
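The loop above can be sketched in a few lines of Python. This is a toy, not Meta's or AWS's code: `plan`, `verify`, `enforce_policy`, `run_agent`, the tool table, and the blocked-word policy are all hypothetical stand-ins, and no model is involved. The point is that each step of the loop is ordinary control flow, bookkeeping, and string handling, the kind of work that lands on general-purpose CPUs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]   # hypothetical: a tool is any function from an argument to a text result

BLOCKED_WORDS = {"password"}  # hypothetical policy: redact these before they enter the context


@dataclass
class AgentRun:
    context: List[str] = field(default_factory=list)  # aggregated, policy-checked results
    calls: int = 0                                     # tool calls spent so far


def plan(task: str) -> List[Tuple[str, str]]:
    # Stand-in planner: a real system would ask a model; here the plan is fixed.
    return [("search", task), ("summarize", task)]


def verify(result: str) -> bool:
    # Stand-in verifier: treat an empty result as a failed step.
    return bool(result.strip())


def enforce_policy(text: str) -> str:
    # Redact blocked words before anything is added to the shared context.
    return " ".join("[redacted]" if w.lower() in BLOCKED_WORDS else w for w in text.split())


def run_agent(task: str, tools: Dict[str, Tool], max_retries: int = 2, call_budget: int = 10) -> AgentRun:
    run = AgentRun()
    for tool_name, arg in plan(task):                 # plan
        for _ in range(max_retries + 1):              # retry loop
            if run.calls >= call_budget:              # budget guard across the whole run
                raise RuntimeError("call budget exhausted")
            run.calls += 1
            result = tools[tool_name](arg)            # call tool
            if verify(result):                        # verify
                run.context.append(enforce_policy(result))  # enforce policy, then aggregate
                break
        else:
            # The for-else runs only if no attempt succeeded (no break).
            raise RuntimeError(f"step {tool_name!r} failed after {max_retries + 1} attempts")
    return run


# Usage with fake tools: the first search returns nothing, forcing one retry.
attempts = {"search": 0}


def flaky_search(query: str) -> str:
    attempts["search"] += 1
    return "" if attempts["search"] == 1 else f"results for {query}"


def summarize(query: str) -> str:
    return f"summary of {query} password"


run = run_agent("graviton news", {"search": flaky_search, "summarize": summarize})
print(run.calls)    # 3
print(run.context)  # ['results for graviton news', 'summary of graviton news [redacted]']
```

In a production system each tool call would be a network RPC and the planner a model call, so this bookkeeping is multiplied across every session rather than disappearing.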

AWS’s narrative is straightforward: if you want agentic experiences to feel instant and reliable, you need silicon and networking that are tuned for throughput per dollar and energy per useful operation, not only peak FP16 on a single box.
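"Throughput per dollar" and "energy per useful operation" are simple ratios once you have measurements. The sketch below assumes you already have a request count for a measurement window, the per-instance hourly price from your own bill, and an average power figure per instance; cloud VMs generally do not expose power draw directly, so treat that input as an estimate. `FleetSample`, `requests_per_dollar`, and `joules_per_request` are hypothetical names, not AWS metrics, and the numbers in the example are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FleetSample:
    """One measurement window for a fleet of identical instances (hypothetical schema)."""
    requests_served: int     # successful requests completed in the window
    window_seconds: float    # length of the measurement window
    instances: int           # instances running for the whole window
    hourly_price_usd: float  # price per instance-hour, taken from your own bill
    avg_power_watts: float   # estimated average power per instance (an assumption)


def requests_per_dollar(s: FleetSample) -> float:
    # Cost of the window = price per hour * instances * hours elapsed.
    cost_usd = s.hourly_price_usd * s.instances * (s.window_seconds / 3600.0)
    return s.requests_served / cost_usd


def joules_per_request(s: FleetSample) -> float:
    # Energy = power (W, i.e. J/s) * instances * seconds.
    energy_j = s.avg_power_watts * s.instances * s.window_seconds
    return energy_j / s.requests_served


# Made-up numbers, purely to show the arithmetic.
sample = FleetSample(requests_served=360_000, window_seconds=3600.0, instances=2,
                     hourly_price_usd=1.0, avg_power_watts=200.0)
print(requests_per_dollar(sample))  # 180000.0
print(joules_per_request(sample))   # 4.0
```

Comparing two instance families then means running the same workload on each and comparing these two ratios, rather than comparing peak specifications.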

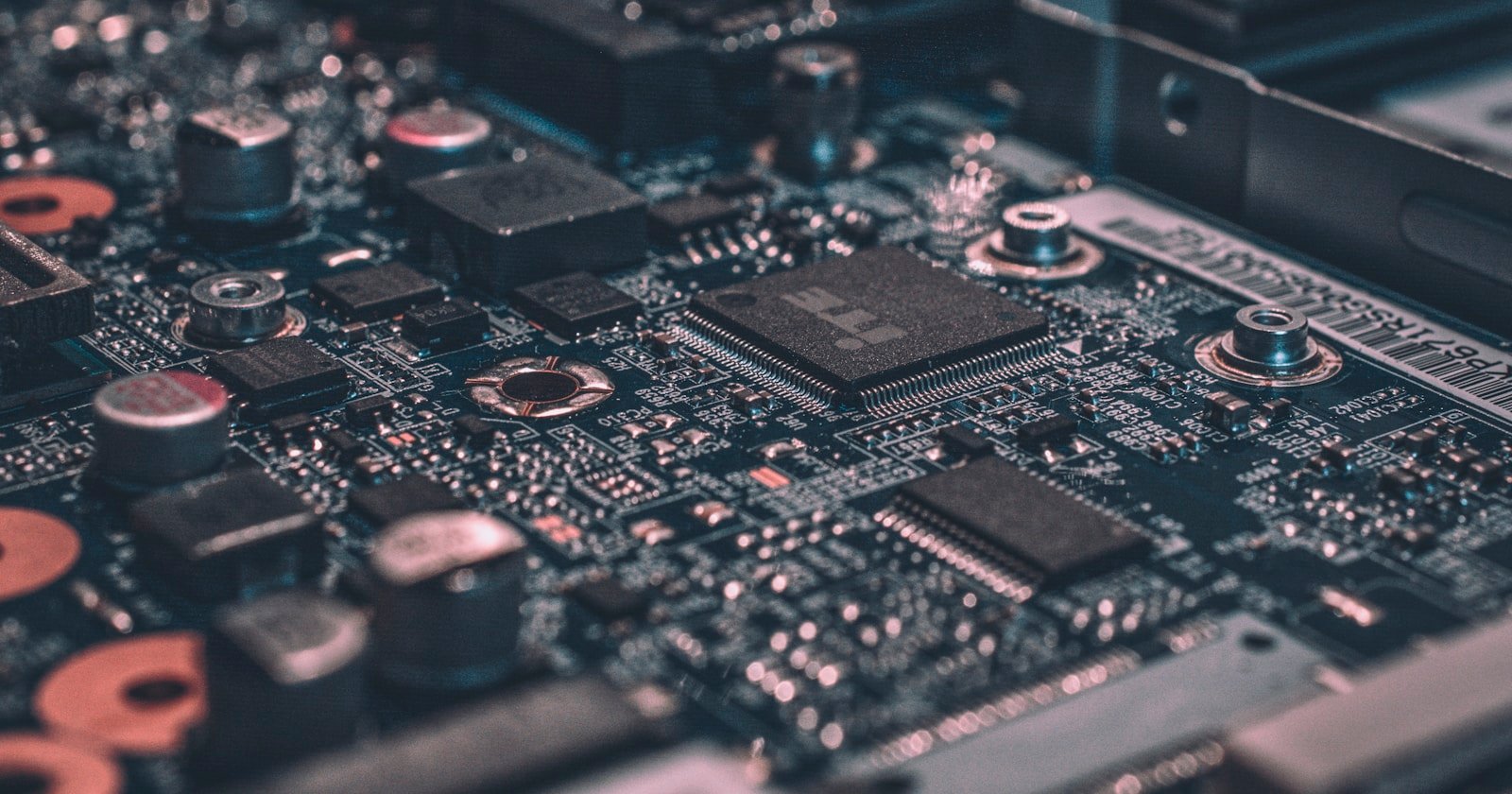

What AWS highlighted about Graviton5

Amazon’s materials emphasize Graviton5 as a purpose-built step forward—very high core counts, a substantially larger cache versus the prior generation (claimed to reduce cross-core communication delays by up to roughly a third in relevant paths), and platform integration aimed at predictable performance for data-heavy pipelines.

- 192 cores (as described in AWS’s announcement narrative) and a much larger cache budget tuned for data movement between cores.

- Up to ~25% better performance than the prior Graviton generation in AWS’s positioning—always validate on your own benchmarks.

- Built on a 3 nm-class process, per AWS: smaller transistors generally improve performance per watt, although the real-world gain depends on the rest of the chip and platform design.

Nitro, EBS, ENA—and why networking still matters

Graviton instances on AWS sit on top of the Nitro System: dedicated hardware and firmware that offload virtualization, security, and storage and network paths, so customers get closer to bare-metal performance while still using familiar cloud abstractions. For Meta, that matters because agentic stacks are distributed systems first: Elastic Network Adapter (ENA) networking, Amazon EBS volumes, and consistent VM semantics reduce surprises when fleets autoscale.

AWS also calls out support for Elastic Fabric Adapter (EFA) on relevant Graviton5 instance families—low-latency, high-bandwidth inter-node communication. That is the kind of capability you lean on when a single user session explodes into a parallel graph of tool calls and microservices that must complete under a tight tail-latency budget.
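To see why tail latency dominates a fan-out, here is a minimal asyncio sketch of one session issuing several tool calls in parallel under a fixed deadline. It is an illustration, not AWS or Meta code: `fan_out` and the three lookup functions are hypothetical, and returning `None` for late or failed calls is one possible degradation policy among several.

```python
import asyncio
from typing import Awaitable, Callable, Dict, Optional


async def fan_out(
    calls: Dict[str, Callable[[], Awaitable[str]]],
    budget_s: float,
) -> Dict[str, Optional[str]]:
    """Run every call concurrently; keep whatever finishes inside the budget."""
    tasks = {name: asyncio.create_task(fn()) for name, fn in calls.items()}
    done, pending = await asyncio.wait(tasks.values(), timeout=budget_s)
    # Cancel stragglers so they stop consuming resources, then let the cancellations settle.
    for task in pending:
        task.cancel()
    await asyncio.gather(*pending, return_exceptions=True)
    results: Dict[str, Optional[str]] = {}
    for name, task in tasks.items():
        # None marks a call that timed out or raised; the caller decides how to degrade.
        if task in done and task.exception() is None:
            results[name] = task.result()
        else:
            results[name] = None
    return results


# Hypothetical tools: one fast, one slower than the budget, one that fails.
async def fast_lookup() -> str:
    await asyncio.sleep(0.01)
    return "fast"


async def slow_lookup() -> str:
    await asyncio.sleep(1.0)
    return "slow"


async def broken_lookup() -> str:
    raise ValueError("tool error")


results = asyncio.run(
    fan_out({"fast": fast_lookup, "slow": slow_lookup, "broken": broken_lookup}, budget_s=0.2)
)
print(results)  # {'fast': 'fast', 'slow': None, 'broken': None}
```

The session's latency is set by the budget or the slowest call, whichever comes first, which is why low-latency, consistent inter-node networking matters more as the number of parallel calls per session grows.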

What leadership said (lightly edited for clarity)

Amazon: “This isn’t just about chips; it’s about giving customers the infrastructure foundation, as well as data and inference services, to build AI that understands, anticipates, and scales efficiently to billions of people worldwide,” said Nafea Bshara, vice president and distinguished engineer, Amazon—framing the partnership as silicon plus the full AWS AI stack.

Meta: “As we scale the infrastructure behind Meta’s AI ambitions, diversifying our compute sources is a strategic imperative. AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale,” said Santosh Janardhan, head of infrastructure, Meta.

Inference as a “building block” and the year ahead

AWS leadership has been vocal that inference—serving models and agents in production—is becoming a first-class design constraint for the industry. Task-accomplishing agents are not “just content generation”; they are stateful systems with budgets, policies, and retries. In practice, that pushes teams to engineer for cost curves and power curves as much as for leaderboard scores.

If you are building agentic products inside a company, the Meta–AWS story is a useful reminder: your unit economics live in the whole stack—not only the GPU cluster that trains the model.

Learn more (official + practical)

For the latest instance specs, migration notes, and performance claims, start with AWS’s Graviton overview and follow AWS “What’s New” posts for Graviton5 availability in your regions. If you want a structured path from fundamentals to production-style agentic systems, our Agentic AI Developer Bootcamp is built to help you reason about orchestration, evaluation, and deployment—not just prompting.

Disclaimer: Details of public cloud partnerships change quickly. Treat numbers, quotes, and generation names as time-stamped pointers—always confirm against official AWS and Meta communications before making procurement or architecture decisions.


Frequently Asked Questions

If GPUs train the big models, why does Meta need more Graviton CPUs?

Training frontier models still leans heavily on GPUs, but production agentic systems spend enormous cycles on coordination, retrieval, tool calls, ranking, and real-time inference paths that are often CPU-bound at hyperscale. Purpose-built Arm CPUs like Graviton can improve performance per watt for those layers.

What is AWS Graviton5 in one sentence?

Graviton5 is AWS’s latest generation of custom Arm-based processors—announced with very high core counts, larger caches, and platform features (Nitro, EFA) aimed at throughput, latency, and secure cloud scale.

Where can I read the official AWS material on Graviton?

Start with AWS’s Graviton product pages and “What’s New” posts for the latest instance families, benchmarks, and migration guides. Always verify pricing and SKUs for your own workloads.
