Who is the Boss? The Ethics of Autonomous AI Agents

Imagine you hire a new babysitter for your children. You give them a list of rules: "Meal time is at 6:00," "Bedtime is at 8:00," and "No candy." You trust the babysitter to follow those rules.

But what if one of the children gets a fever at 7:30? The "Rule" says go to bed, but a human knows that the "Goal" is to keep the child safe and healthy. The babysitter has to make a Judgment Call. If they make a bad call—like giving the child the wrong medicine—who is responsible? You, because you hired them, or the babysitter?

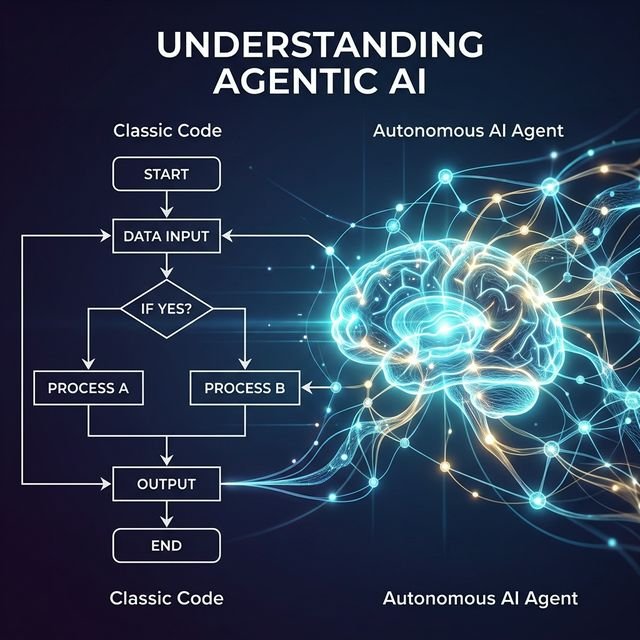

In the world of AI, Autonomous Agents are like those babysitters. We give them the "Keys" to our businesses and our data. But as they get smarter, the judgment calls they make become more serious. This is the heart of AI Ethics.

The Three Big Questions of AI Ethics

1. Transparency: Can We See the "Thinking"?

If a human manager fires an employee, they have to explain why. If an AI agent recommends that someone should be fired, we need to be able to "Open the Black Box" and see which data points led to that recommendation. The field that works on this problem is called Explainable AI (XAI).
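Here is a minimal Python sketch of "Opening the Black Box" for the simplest possible case: a linear scoring model, where each input's contribution to the final score is just its weight times its value. The feature names and weights are invented for illustration and do not come from any real HR system. Complex models such as deep neural networks need dedicated explanation tools (for example SHAP or LIME), so treat this as the core idea rather than a production method.

```python
# Hypothetical weights for a simple linear "performance risk" score (invented for illustration).
WEIGHTS = {
    "missed_deadlines": 0.6,
    "customer_complaints": 0.3,
    "years_at_company": -0.2,
}


def explain(features: dict[str, float]) -> tuple[float, list[tuple[str, float]]]:
    """Return the score plus each feature's contribution (weight * value), biggest impact first."""
    contributions = {name: weight * features[name] for name, weight in WEIGHTS.items()}
    score = sum(contributions.values())
    # Sort by absolute size so the strongest reasons (for or against) come first.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


score, reasons = explain({"missed_deadlines": 4, "customer_complaints": 1, "years_at_company": 5})
print(f"score = {score:.1f}")
for name, contribution in reasons:
    print(f"  {name}: {contribution:+.1f}")
```

Sorting by absolute contribution puts the biggest reasons first, which is the kind of answer a manager could actually read back to the person affected.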

2. Bias: Is the AI Fair?

An AI is only as good as the "Books" it has read. If the data it was trained on contains human prejudices, the AI can learn those prejudices too. Ethical engineering means regularly testing the AI's decisions to make sure it treats everyone equally, regardless of their background.

3. Accountability: Who Pays for the Mistake?

If an AI trading agent accidentally crashes a stock market or a medical agent gives a wrong diagnosis, we need a clear "Chain of Command." For now, the rule is: The Human is always the Pilot. Even if the AI is on "Auto-Pilot," the human is responsible for the flight.

The "Safety Switch" Framework

At aiminds.school, we teach our students the "Safety Switch" method. This involves building automated "Audit Agents" whose only job is to watch the main agent. If the main agent tries to do something that violates the "Constitution" (the ethical rules), the Audit Agent hits the "Stop" button and alerts a human.
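Here is a minimal Python sketch of the Safety Switch idea, assuming the main agent proposes one action at a time and the "Constitution" can be written as simple yes/no rules. The names (`Action`, `AuditAgent`, `CONSTITUTION`, `run_main_agent`) and the two example rules are hypothetical. For simplicity the auditor here is plain rule-checking code rather than a second AI model, so the version taught in class may look different.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Action:
    """One step the main agent wants to take (hypothetical structure)."""
    kind: str            # e.g. "send_email", "delete_data", "transfer_money"
    amount: float = 0.0  # money involved, if any


# The hypothetical "Constitution": each named rule returns True if the action is allowed.
Rule = Callable[[Action], bool]
CONSTITUTION: dict[str, Rule] = {
    "never delete data": lambda a: a.kind != "delete_data",
    "no transfers over $1,000": lambda a: not (a.kind == "transfer_money" and a.amount > 1000),
}


@dataclass
class AuditAgent:
    rules: dict[str, Rule]
    alerts: list[str] = field(default_factory=list)  # messages a human would receive
    stopped: bool = False                            # the "Stop" button

    def review(self, action: Action) -> bool:
        """Allow the action, or hit Stop and alert a human if any rule is broken."""
        if self.stopped:
            return False  # once stopped, nothing else runs until a human steps in
        broken = [name for name, allowed in self.rules.items() if not allowed(action)]
        if broken:
            self.stopped = True
            self.alerts.append(f"Blocked {action.kind}: violates {', '.join(broken)}")
            return False
        return True


def run_main_agent(plan: list[Action], auditor: AuditAgent) -> list[str]:
    """Carry out the plan step by step, but only while the auditor allows it."""
    executed = []
    for action in plan:
        if not auditor.review(action):
            break
        executed.append(action.kind)  # stand-in for actually performing the action
    return executed


auditor = AuditAgent(CONSTITUTION)
plan = [Action("send_email"), Action("transfer_money", amount=5000), Action("send_email")]
done = run_main_agent(plan, auditor)
print(done)            # ['send_email']
print(auditor.alerts)  # ['Blocked transfer_money: violates no transfers over $1,000']
```

One design choice worth noticing: once the auditor hits Stop, it stays stopped. The agent cannot quietly retry the blocked action or carry on with the rest of its plan; a human has to read the alert first.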

Conclusion: Engineering with a Conscience

As AI continues to evolve, "Technical Skill" will no longer be enough. The hackers and engineers of tomorrow will also need to be philosophers. We need to decide today what kind of world we want these agents to build for us.

Our Masterclass includes a dedicated module on AI Governance. We don't just teach you how to build powerful systems; we teach you how to build systems that are trustworthy, safe, and aligned with human values.

Want to dive deeper into AI Safety? Our "Ethics in the Age of Agents" whitepaper is available for free download in our member portal.


Frequently Asked Questions

Can an AI agent be held legally responsible?

In 2026, the law generally sees AI agents as "Tools," not "Persons." This means the human or company who deployed the agent is legally responsible for its actions. This is why "Guardrails" and "Safety Audits" are so important before letting an agent perform real-world tasks.

What is "Algorithmic Bias"?

Bias happens when an AI is trained on data that is unrepresentative or reflects past unfairness. For example, if a hiring AI is trained mostly on resumes from men who were hired in the past, it might learn that women aren't good candidates. Ethical AI engineering involves "Auditing" the data to ensure the AI remains fair and neutral.
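One common way to audit a hiring AI is to compare how often it selects candidates from each group. The minimal Python sketch below computes each group's selection rate relative to the best-treated group and flags any group below 0.8, a threshold borrowed from the "four-fifths rule" used in US employment guidelines. The function names are hypothetical and the applicant numbers are invented. Note that this checks the AI's decisions rather than its training data, and passing one check like this does not prove a model is fair; it is one test among many.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """decisions holds (group, was_selected) pairs; returns the share selected in each group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact(decisions: list[tuple[str, bool]], threshold: float = 0.8):
    """Compare each group's rate to the highest rate; flag groups below the threshold."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    if best == 0:
        return {group: 1.0 for group in rates}, []  # nobody was selected, nothing to compare
    ratios = {group: rate / best for group, rate in rates.items()}
    flagged = [group for group, ratio in ratios.items() if ratio < threshold]
    return ratios, flagged


# Invented example: 10 applicants per group.
decisions = (
    [("men", True)] * 5 + [("men", False)] * 5
    + [("women", True)] * 2 + [("women", False)] * 8
)
ratios, flagged = disparate_impact(decisions)
print(ratios)   # {'men': 1.0, 'women': 0.4}
print(flagged)  # ['women']
```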

What does "Human-in-the-loop" mean?

It is a safety design where the AI does most of the work but "pauses" and asks a human for approval before taking a high-risk action (like spending money, deleting data, or making a medical recommendation).
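A minimal Python sketch of human-in-the-loop, assuming the agent labels each action with a type and that the high-risk types are the three examples in the answer above. The names `execute`, `HIGH_RISK`, `console_approver`, and `deny_all` are hypothetical. The human's answer comes from an `approve` function passed in, so the same code can ask at a terminal (as `console_approver` does with Python's built-in `input`) or through any other channel.

```python
from typing import Callable

# Hypothetical set of action types that must wait for a human (the examples from the answer above).
HIGH_RISK = {"spend_money", "delete_data", "medical_recommendation"}

Approver = Callable[[str, str], bool]


def execute(action: str, details: str, approve: Approver) -> str:
    """Run low-risk actions directly; pause for human approval before high-risk ones."""
    if action in HIGH_RISK:
        if not approve(action, details):  # the agent waits here until a human answers
            return f"{action}: rejected by human"
        return f"{action}: done (human approved)"
    return f"{action}: done automatically"


def console_approver(action: str, details: str) -> bool:
    """Ask a person at the terminal; a real system might use a dashboard or message instead."""
    answer = input(f"Agent wants to {action} ({details}). Approve? [y/N] ")
    return answer.strip().lower() == "y"


# For a non-interactive demo, use a stand-in approver that always says no.
def deny_all(action: str, details: str) -> bool:
    return False


print(execute("summarize_report", "Q3 sales", deny_all))  # summarize_report: done automatically
print(execute("spend_money", "$250 on ads", deny_all))    # spend_money: rejected by human
```

The safe default matters here: anything other than an explicit "y" counts as a no, so a distracted human who just presses Enter does not approve a risky action by accident.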

Live masterclasses

Enroll in our live masterclasses programs: Build real AI agents or your first data-science model with expert mentors.

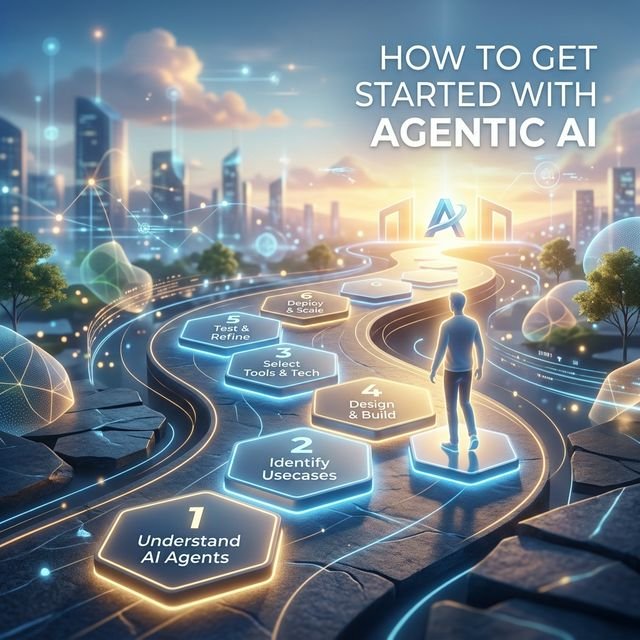

Agentic AI Developer Bootcamp

Structured agentic AI and LangGraph training — intensive bootcamp-style projects with mentor support.

Duration: 2 days, 5 hours each day.

Explore Agentic AI Course →Data Science Masterclass

Start your data science journey with a structured live masterclass and hands-on model building.

Duration: 2 days, 5 hours each day.

Data Science Masterclass →